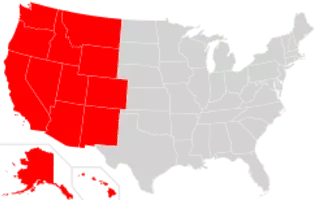

About Western United States

The Western United States is the region comprising the westernmost states of the United States. As European settlement in the U.S. expanded westward over the centuries, the meaning of the term "the West" changed. Before about 1800, the crest of the Appalachian Mountains was seen as the western frontier.